Back catching Xenopus in Tucson, Arizona

In 1995, during my PhD at Bristol University, I visited Tucson, Arizona, to study the population of African clawed frogs at the Arthur Pack Desert Golf Course. The population is known to date to the late 1970s, when a local academic seeded many impoundments in southern Arizona with Xenopus, hoping that he could pick up breeding animals in the future for selling on. It seems that most of the introductions were failures, but at this one golf course the population took hold.

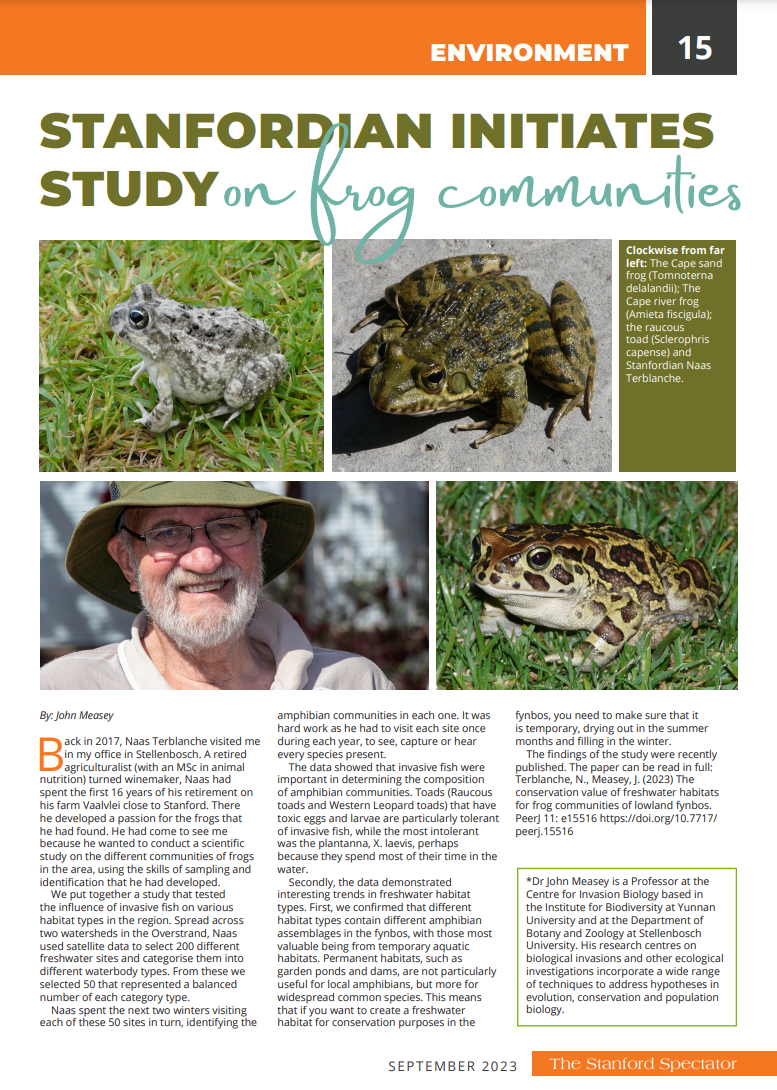

Back in 1995, it was really hot when I visited this site, reaching 47 °C on one of the days that I was there. That's still the hottest temperature I've ever experienced.

I've long wanted to return to the site, and so I made it a priority on the North American leg of my world Xenopus tour.

The picture above is from 1995 and is the southernmost lake on the golf course, featuring a canoe which I was allowed to use to paddle around this lake and set traps.

This is the same site now. The willow tree is gone, but you can see the same wall above the lake.

The canoe is still around and once again I was allowed to use it and paddle out to a pole in the deepest part of the northernmost lake to set some temperature loggers.

I remember the last time I sat in that canoe, in 1995, a golf ball whizzed past my ear, making me very pleased that I was wearing a hard hat.

With the help of Becca Cozad and her ARC crew, Karen & Maya, we were able to quickly run through the catch and process all the animals needed from Arizona. We were also joined by Randy Babb, who brought his seine net, which made really short work of bringing in a lot of tadpoles for sampling.

A special thank you to Brian Stevens and all his staff for making us so welcome at the Crooked Tree Golf Course. They really pulled out all the stops and went out of their way to make sure that this was a successful mission. Strapping a canoe to the top of a golf cart and driving it across the course was unforgettable. We really are most indebted to them all.