The southwestern Cape is getting warmer and drier - bad news for frogs

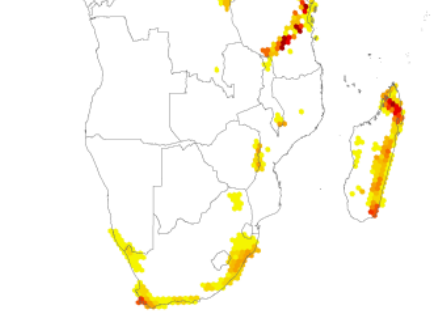

Many of you will be aware that the extreme southwestern Cape of South Africa is a frog biodiversity hotspot. There are four endemic genera and 36 endemic species that occur in the fynbos alone (see Colville et al. 2014). It is also a hotspot where many species are threatened, as shown in the following image from Angulo et al. (2011). Reasons for the threats include habitat transformation and invasive species, but new data from the IPCC support what many of us have been thinking: that the climate is getting worse for amphibians in this area.

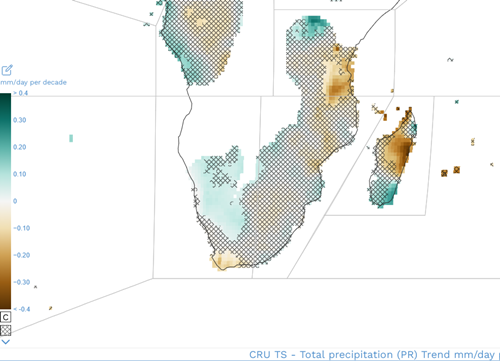

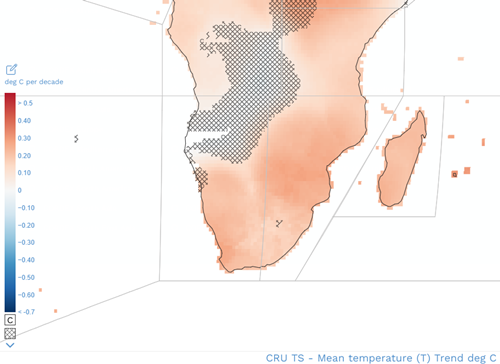

Frogs from this area breed in seasonal, temporary vernal pools and puddles, or have direct-developing tadpoles that rely on seepages and moist environments. A combination of reduced rainfall and increased temperatures in this region is therefore bad news for frogs.

This area of the world is also under high anthropogenic pressure. The City of Cape Town has expanded to include ~4.6 million people, all of whom require food grown in the lowland areas north and east of the city. Species in the mountains are generally impacted by invasive plants such as pines and acacias.

The new IPCC WGI Interactive Atlas (https://interactive-atlas.ipcc.ch/) with regional information allows users to plot mean temperatures and mean precipitation per decade from 1980 to 2015. The trend in these data shows that this region of the world is getting warmer and drier. This change is within living memory, and chimes with comments from the community that people walking in the mountains now encounter fewer frogs than they used to. According to Barry Rose, who as a boy in the 1950s collected frogs for his grandfather, the mountains of the Cape Peninsula held an abundance of frogs that would jump out of his path as he moved through the fynbos. Now they seem to be far fewer.

We have also seen this trend in our research on toadlets from the genus Capensibufo, which are no longer found in many of the places where they were recorded in the 1970s (Cressey et al. 2015). More general analyses of the frog assemblage in this area are given by Mokhatla et al. (2015).

Is climate to blame for enigmatic declines?

It is very hard to know whether climate change alone is reducing the overall numbers of frogs in the fynbos. But this does underline the importance of monitoring populations of the species that remain. Very few areas are being monitored, and we appreciate that there is a need for long-term support for initiatives that are collecting data (Measey et al. 2019).

See the recent blog posts (here and here) to find out more about how the MeaseyLab is attempting to monitor populations of frogs by using aSCR to quantify the density of calling males.

Literature

Angulo, A., Hoffmann, M. & Measey, G.J. (2011). Introduction: Conservation assessments of the amphibians of South Africa and the world. In: Ensuring a future for South Africa’s frogs: a strategy for conservation research. (ed. G.J. Measey), pp. 1-9. SANBI Biodiversity Series 19. South African National Biodiversity Institute, Pretoria.

Colville, J.F., Potts, A.J., Bradshaw, P.L., Measey, G.J., Snijman, D., Picker, M.D., Procheş, S., Bowie, R.C.K. & Manning, J.C. (2014). Floristic and faunal Cape biochoria: do they exist? In: Fynbos: ecology, evolution, and conservation of a megadiverse region. (eds. Allsopp, N., Colville, J.F., Verboom, G.A.), pp. 73-93. Oxford University Press.

Cressey, E.R., Measey, G.J. & Tolley, K.A. (2015). Fading out of view: the enigmatic decline of Rose’s mountain toad Capensibufo rosei. Oryx, 49, 521–528. https://doi.org/10.1017/S0030605313001051

Measey, J., Tarrant, J., Rebelo, A.D., Turner, A.A., Du Preez, L.H., Mokhatla, M.M. & Conradie, W. (2019). Has strategic planning made a difference to amphibian conservation research in South Africa? African Biodiversity & Conservation - Bothalia, 49(1), a2428. https://doi.org/10.4102/abc.v49i1.2428

Mokhatla, M.M., Rödder, D. & Measey, G.J. (2015). Assessing the effects of climate change on distributions of Cape Floristic Region amphibians. South African Journal of Science, 111, 2014-0389. https://doi.org/10.17159/sajs.2015/20140389