Being aware that you can get it wrong

With all the will in the world, when you are testing your hypothesis using statistics there is a chance that you will accept your alternative hypothesis when it is not valid. This is known as a ‘false positive’ or a Type I error. It is also possible that you will accept your null hypothesis when you should have accepted your alternative hypothesis, also known as a Type II error, or a ‘false negative’. While it won't be any fun to get a Type II error, as scientists we should be more worried about Type I errors and the way in which they occur. This is because a positive outcome is more likely to be published, and others may then pursue the same line of investigation, mistakenly believing that their outcome is likely to be positive. Indeed, there are lots of ways in which researchers may inadvertently or deliberately influence their outcomes towards Type I errors. This can even become a cultural bias that then permeates the literature.

Humans have a bias towards getting positive results (Trivers 2011). If you’ve put a lot of effort into an experiment, then when you are interpreting your result you might be motivated to reason in a way that makes you more likely to accept your initial hypothesis. This is called ‘motivated reasoning’, and it is a rational explanation for why so many scientists get caught up in Type I errors. This is also known as confirmation bias, and we discuss it in more detail elsewhere on this blog. Another manifestation of this is publication bias, which also tends to be skewed towards positive results. Put together, this means that scientists, being human, are more vulnerable to making Type I errors than Type II errors when evaluating their hypotheses. It is important to understand that simply by chance you can make a Type I error, accepting your alternative hypothesis even when it is not correct.
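To see how a Type I error can arise by chance alone, here is a minimal simulation sketch. It is not taken from any of the cited papers. It assumes a simple two-sided z-test with a known standard deviation of 1, a significance threshold of 0.05, and 20 samples per group; the function name and all of these settings are illustrative choices. Both groups are drawn from the same distribution, so every "significant" result is a false positive.

```python
import random
from statistics import NormalDist, mean


def simulate_false_positive_rate(n_experiments=2000, n_per_group=20,
                                 alpha=0.05, seed=1):
    """Fraction of experiments that reject a null hypothesis that is true."""
    rng = random.Random(seed)
    std_normal = NormalDist()
    # Standard error of a difference between two means when sigma = 1 is known
    # (an assumption made to keep the sketch simple).
    se = (2 / n_per_group) ** 0.5
    false_positives = 0
    for _ in range(n_experiments):
        # Both groups come from the same distribution: there is no real effect.
        control = [rng.gauss(0, 1) for _ in range(n_per_group)]
        treatment = [rng.gauss(0, 1) for _ in range(n_per_group)]
        z = (mean(treatment) - mean(control)) / se
        p_value = 2 * (1 - std_normal.cdf(abs(z)))
        if p_value < alpha:
            false_positives += 1
    return false_positives / n_experiments


rate = simulate_false_positive_rate()
print(f"Share of 'significant' results with no real effect: {rate:.3f}")
```

The share should land close to the chosen threshold: even with a flawless experiment, roughly one test in twenty of a non-existent effect will come out positive at a threshold of 0.05.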

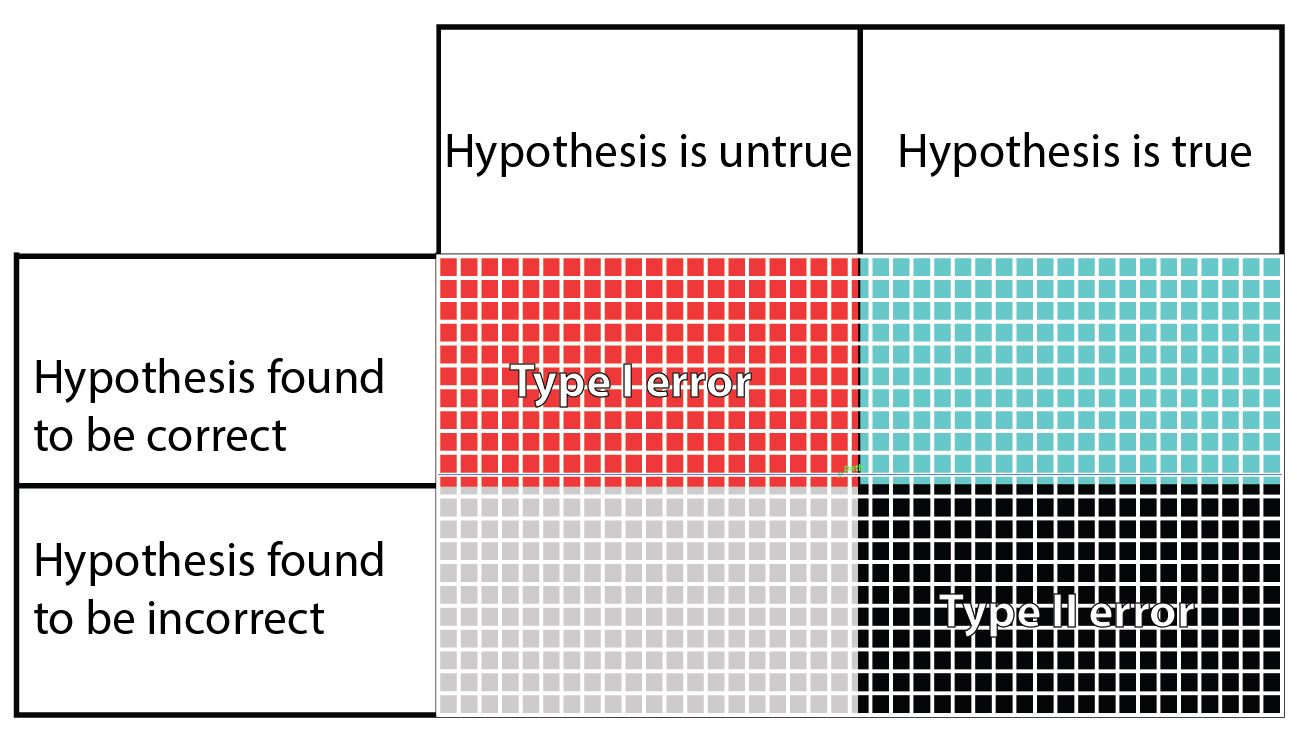

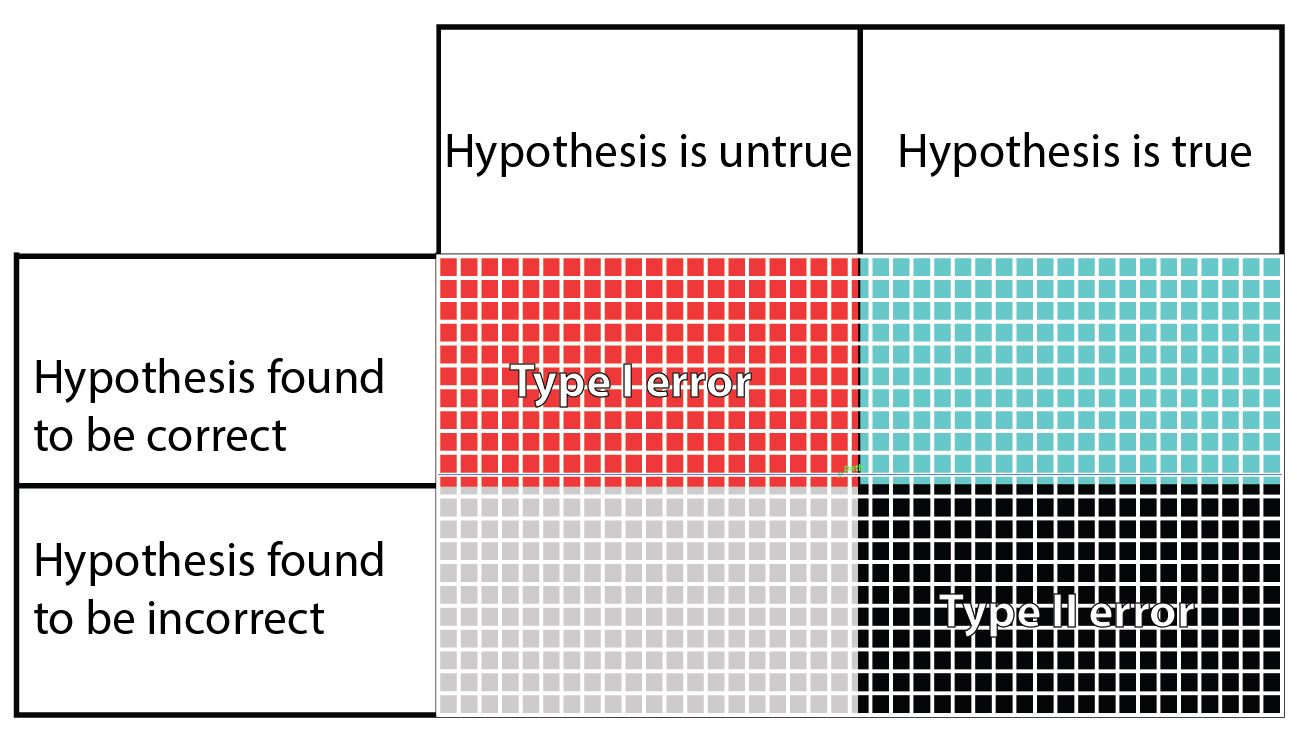

In this table, the columns refer to the truth regarding a hypothesis, even though the truth is unknown to the researcher. The rows are what the researcher finds when testing their hypothesis. The blue squares are what we are hoping to achieve when we set and test our hypothesis. The grey squares may happen if the hypothesis we set is indeed false. The other two possibilities are the false positive Type I error (red), and the false negative Type II error (black). In the table it seems that the chances of getting each of the four outcomes are equal, but in fact this is far from reality. There are several factors that can change the size of each potential outcome of testing a hypothesis.

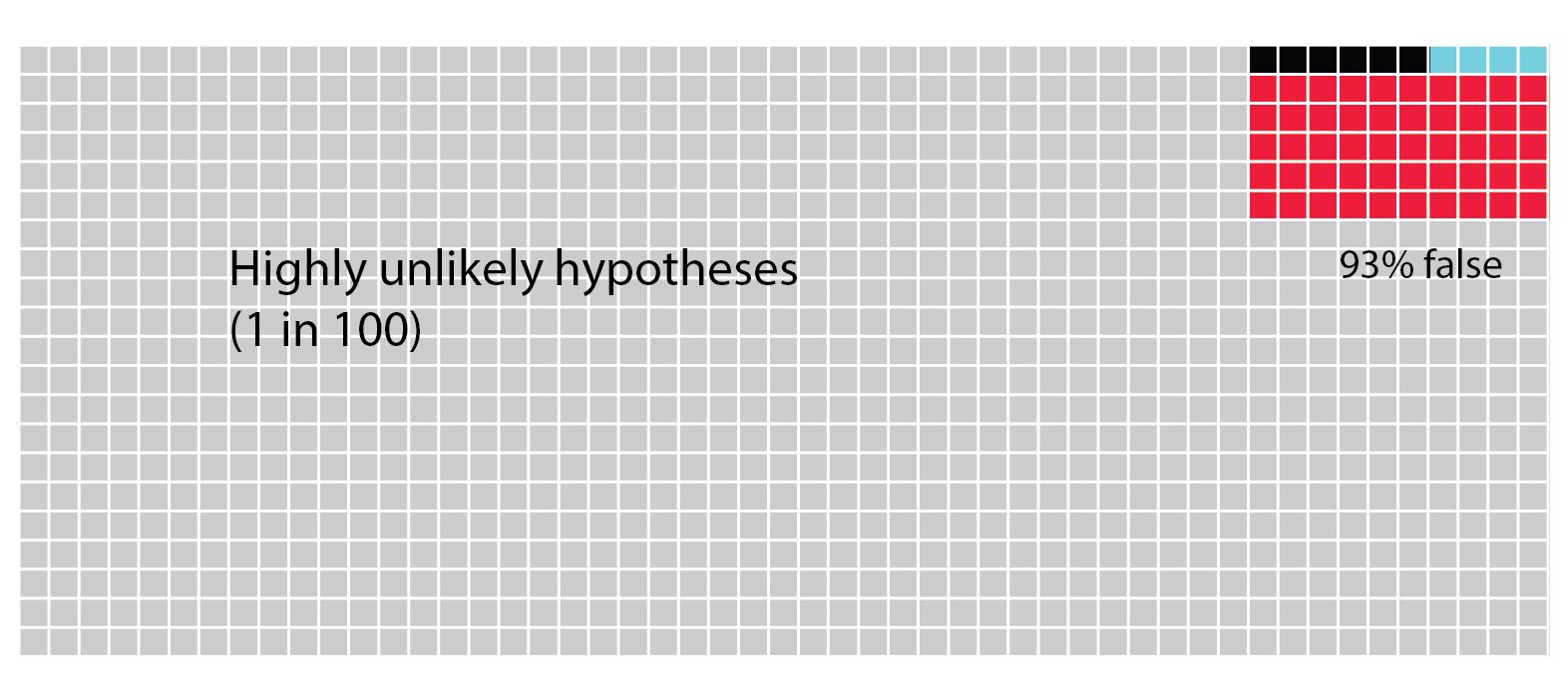

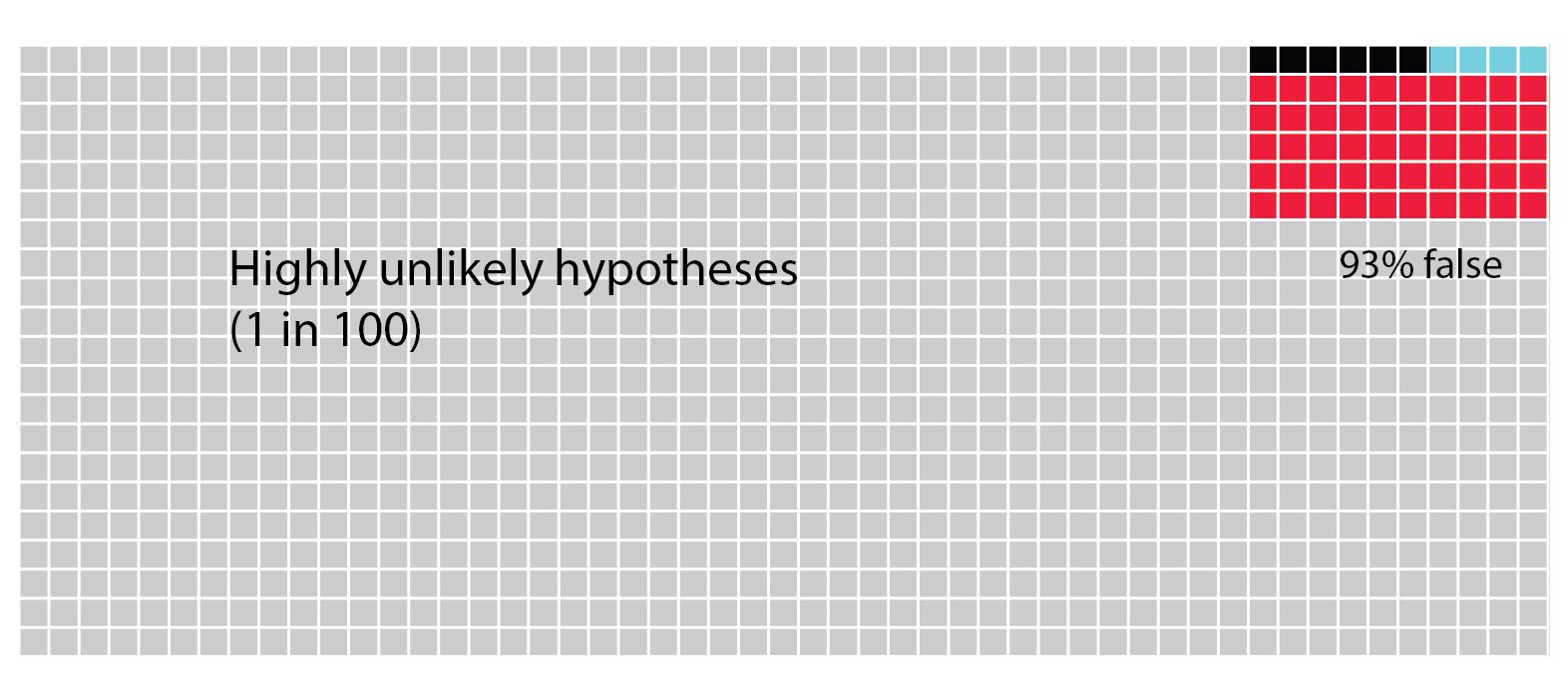

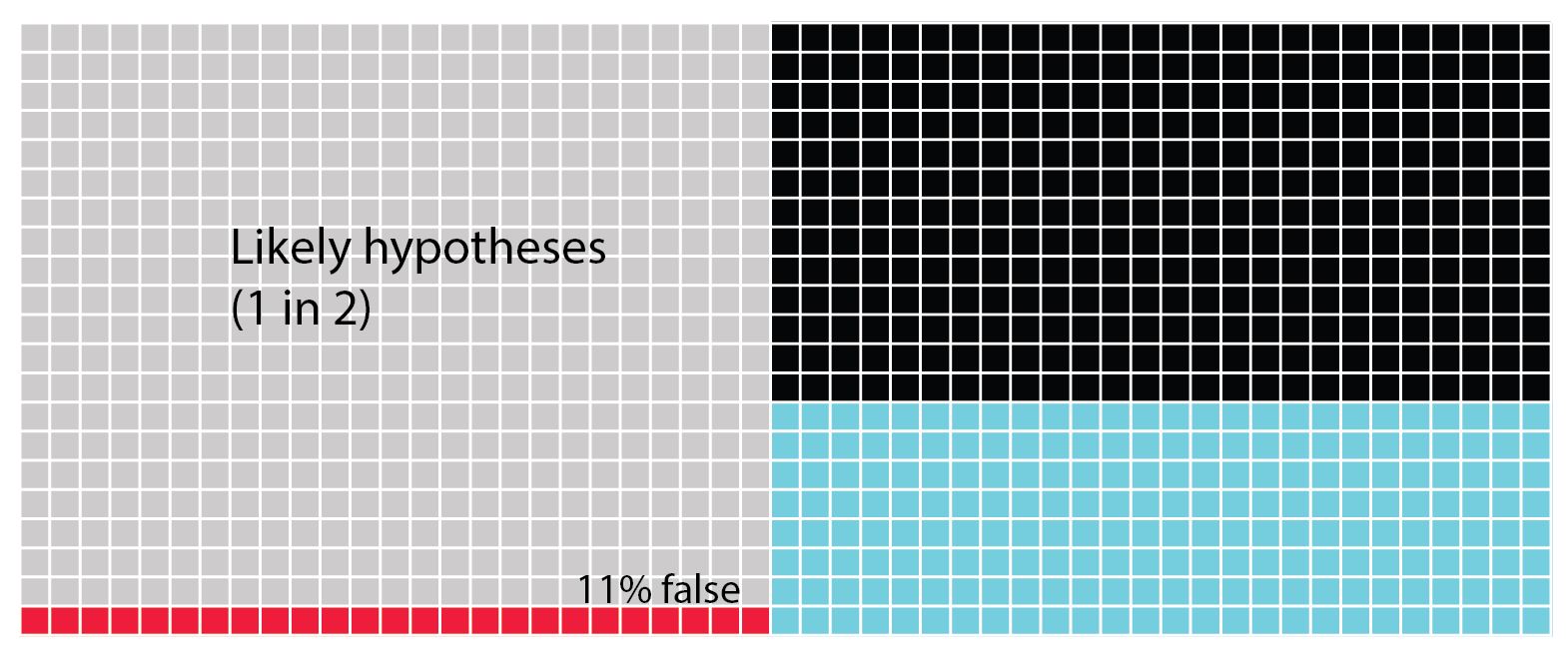

The following figure has been redrawn after figure 1 in Forstmeier et al. (2017). This is a graphical representation of an argument first made by Ioannidis (2005). Each figure shows 1000 hypotheses. You could think of these as outcomes of 1000 attempts at testing the same hypothesis, or as a more global scientific effort of testing lots of hypotheses all over the world. What changes between the figures is the likelihood that the hypotheses are correct. In the first one we see highly unlikely hypotheses that will only be correct one in a hundred times. The blue squares denote the proportion of times in which the hypotheses are tested and found to be true. The black squares denote false negative findings, i.e. a Type II error. The red squares denote false positive findings, i.e. a Type I error. Because the hypothesis is highly unlikely to be correct, the majority of squares are light grey, denoting that it was correctly found to be untrue. Although it might seem unlikely that anyone would test such highly unlikely hypotheses, increasing numbers of governments around the world create incentives for researchers to investigate what they term blue skies research, which might be better termed high risk research or investigations into highly unlikely hypotheses. The real problem with such hypotheses is that you are more likely to get a Type I error than to find that your hypothesis is truly correct.

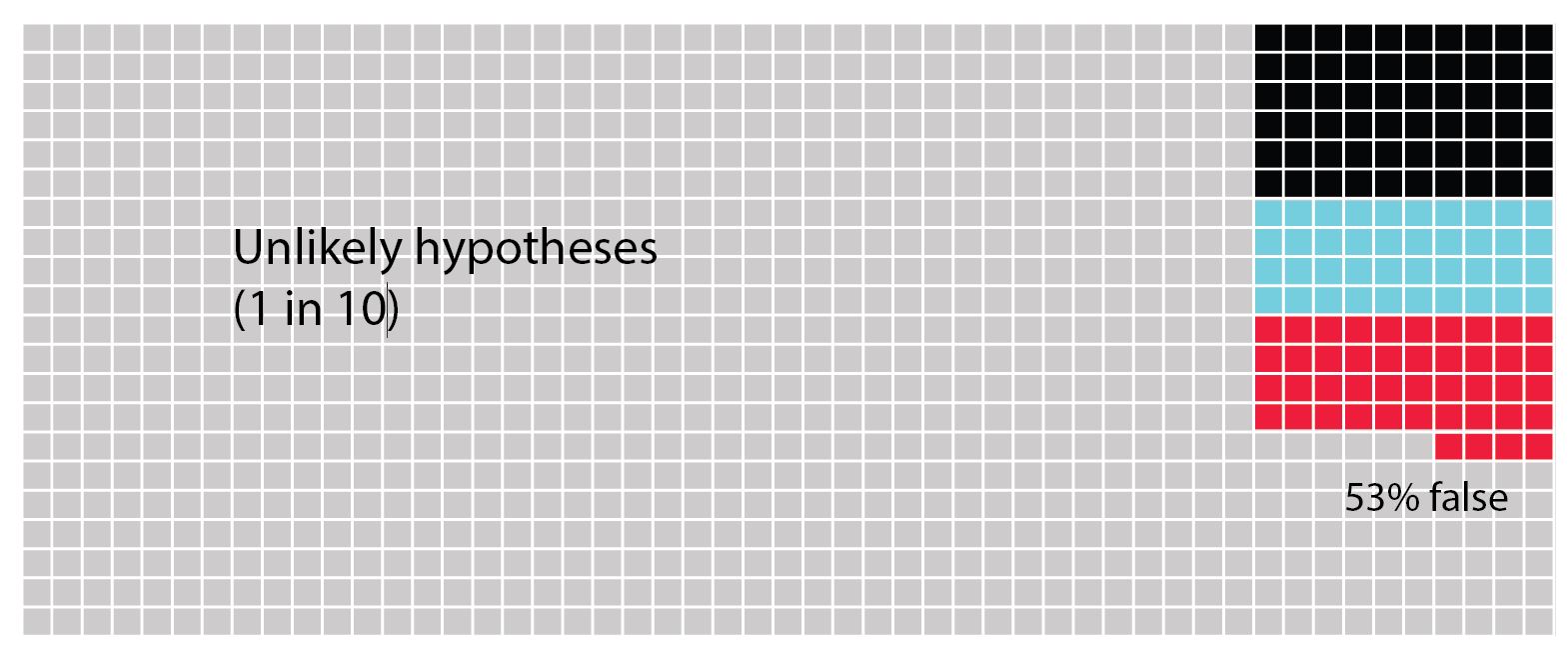

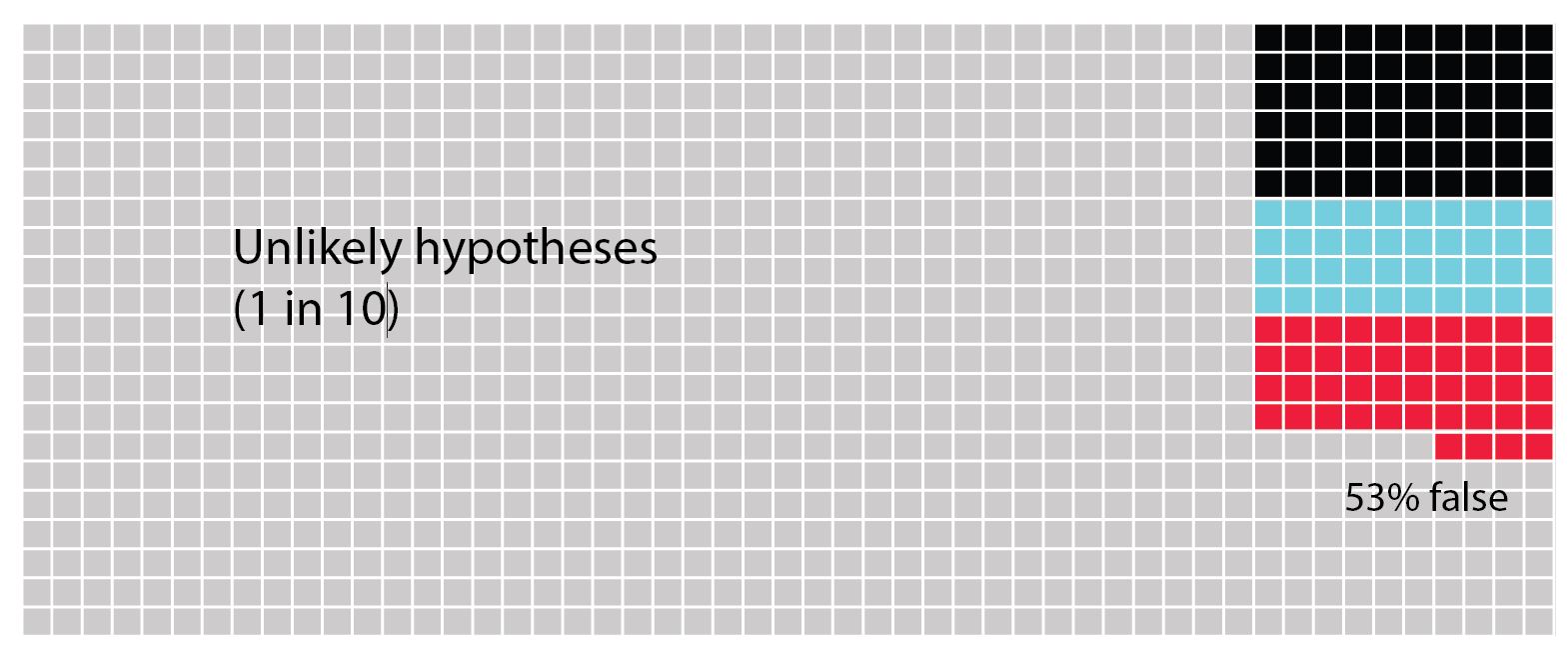

In the next figure we see a scenario of unlikely hypotheses that are correct approximately one in 10 times. Now we see that the probability of committing a Type I error is roughly equivalent to that of a Type II error, and to the probability of finding that the hypothesis is truly correct. Thus, if your result comes out positive, you cannot tell whether it is a true positive or a Type I error.

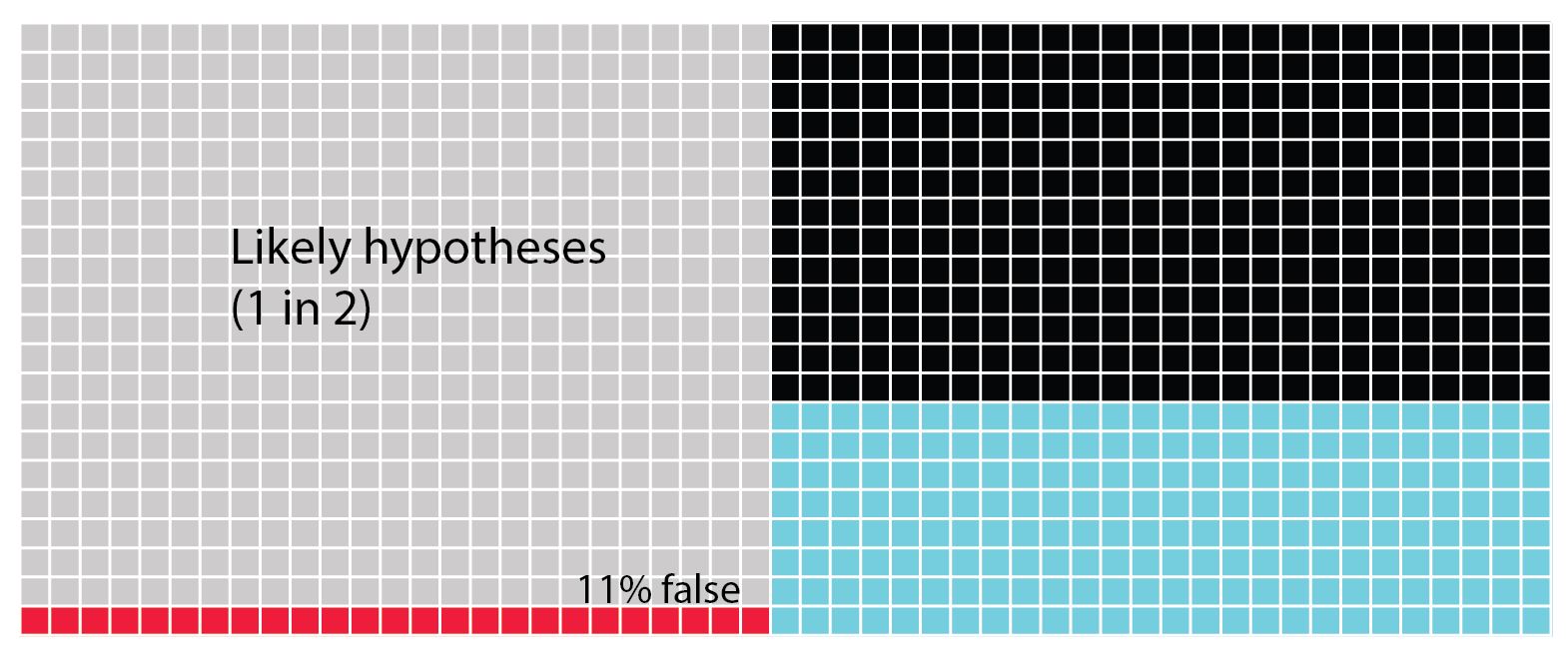

Lastly, we see the scenario in which the hypotheses are quite likely, being correct one in two times. Now we can see that a Type II error is the most likely of the three outcomes. Next, the blue squares show us the chances that we find the hypothesis is truly correct. Lastly, when a result comes out positive, there is only an 11% chance that it is a Type I error.

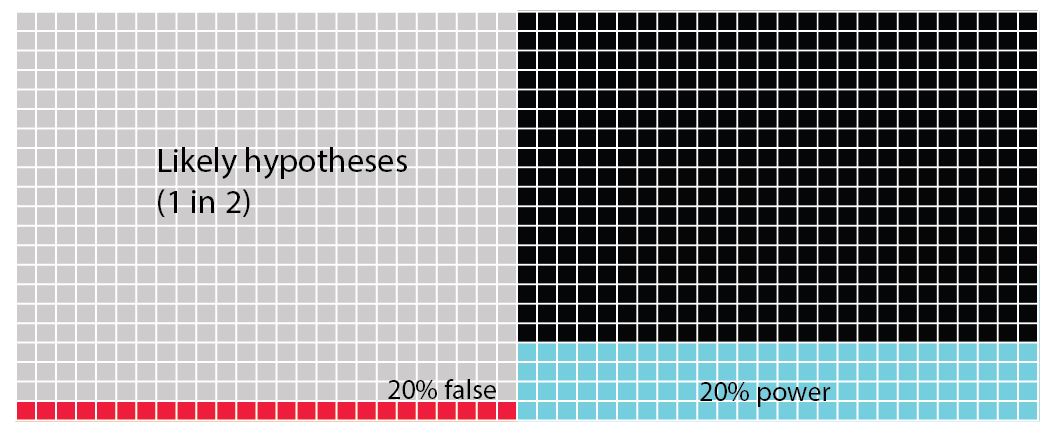

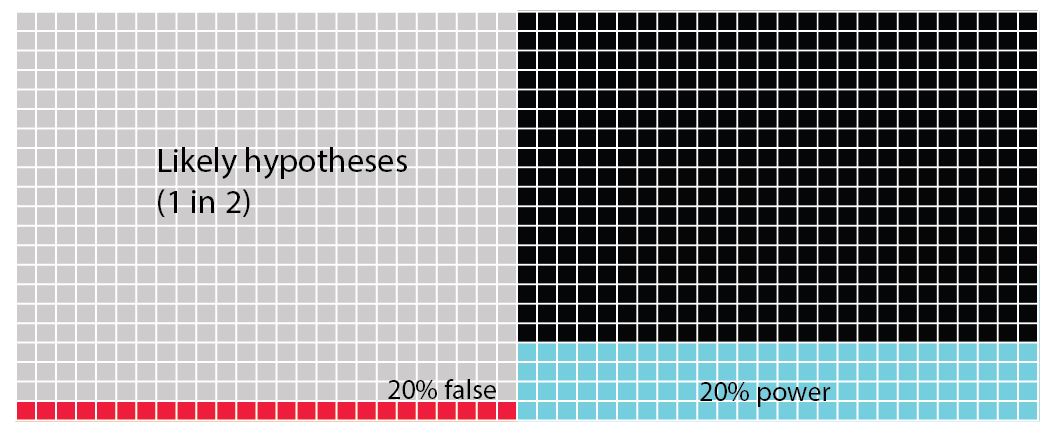
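The counts behind the three scenarios can be tallied with a few lines of arithmetic. This is a sketch rather than the calculation used by Forstmeier et al. (2017): the function name is hypothetical, the power of 40% is the value used in these figures, and the significance threshold of 0.05 is an assumption (it is consistent with the 11% quoted for the one-in-two scenario, since 25 / (25 + 200) is about 11%).

```python
def expected_outcomes(prior, power=0.40, alpha=0.05, n_hypotheses=1000):
    """Expected number of each outcome when testing n_hypotheses hypotheses.

    prior: proportion of hypotheses that are actually correct
    power: chance of detecting a correct hypothesis
    alpha: chance of a positive result for an incorrect hypothesis (assumed 0.05)
    """
    n_true = n_hypotheses * prior
    n_false = n_hypotheses - n_true
    return {
        "true_positive": n_true * power,          # blue squares
        "false_negative": n_true * (1 - power),   # black squares, Type II
        "false_positive": n_false * alpha,        # red squares, Type I
        "true_negative": n_false * (1 - alpha),   # light grey squares
    }


for prior in (0.01, 0.10, 0.50):
    counts = expected_outcomes(prior)
    print(prior, {name: round(value, 1) for name, value in counts.items()})
```

Under these assumptions the one-in-a-hundred scenario gives about 4 true positives against roughly 50 false positives, the one-in-ten scenario gives 40 true positives, 45 false positives and 60 false negatives, and the one-in-two scenario gives 200 true positives, 25 false positives and 300 false negatives.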

In all of these figures (above) the power of the analysis is set at 40%. The statistical power of any analysis depends on the sample size (or number of replicates) you're able to use. Some research has suggested that in most ecological and evolutionary studies this is actually more like 20% (see references in Forstmeier et al. 2017). What is important to notice in the next figure (below) is that when we change the power of the analysis (in this case from 40% to 20%) we change the ratio of Type II errors to correct positive findings. While the overall number of Type I errors does not change, if your analysis tells you to accept your alternative hypothesis, there is now a 1 in 5 chance (20%) that it will be a false positive (Type I error).
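The effect of power on how far you can trust a positive result can be checked directly. The sketch below assumes a significance threshold of 0.05 and the one-in-two scenario, which together reproduce the 11% and 20% quoted in the text; the function name is illustrative.

```python
def false_positive_share(prior, power, alpha=0.05):
    """Probability that a positive result is a false positive (Type I error)."""
    true_positives = prior * power          # correct hypotheses that are detected
    false_positives = (1 - prior) * alpha   # incorrect hypotheses that pass the test
    return false_positives / (true_positives + false_positives)


for power in (0.40, 0.20):
    share = false_positive_share(prior=0.5, power=power)
    print(f"power {power:.0%}: {share:.0%} of positive results are false positives")
```

Halving the power leaves the number of false positives untouched but halves the number of true positives they are mixed in with, so the false positive share rises from about 11% to 20%.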

What you should see when you look at these figures is that there is quite a high chance that we fail to detect a hypothesis that is in fact correct. There's actually much more chance that we will commit a Type II error than correctly find a true positive. Worse, the more unlikely a hypothesis is, the more likely we are to commit the dreaded Type I error.

From the outset, you should try to make sure that the hypotheses you are testing in your PhD are very likely to be correct. In reality, this means that they are iterations of hypotheses that are built on previous work. When you choose highly unlikely hypotheses, you need to be aware that this dramatically increases your chances of making a Type I error. The best way to overcome this is to look for multiple lines of evidence in your research. Once you have the most likely hypothesis that you can produce, you need to crank up your sampling so that you increase the power of your analysis, avoiding Type II errors.
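To get a feel for how much sampling it takes to raise power, here is a rough sketch using the textbook power formula for a two-sided, two-sample z-test. The known-variance z-test, the threshold of 0.05 and the standardized effect size of 0.5 are all simplifying assumptions for illustration; a real study would use a power analysis matched to its own design and test.

```python
from statistics import NormalDist


def power_two_sample_z(effect_size, n_per_group, alpha=0.05):
    """Approximate power of a two-sided, two-sample z-test.

    effect_size: true difference between group means, in standard deviations
    n_per_group: number of samples in each of the two groups
    """
    std_normal = NormalDist()
    z_crit = std_normal.inv_cdf(1 - alpha / 2)
    # Expected value of the z statistic when the effect is real.
    shift = effect_size * (n_per_group / 2) ** 0.5
    # Probability that the z statistic falls beyond either critical value.
    return (1 - std_normal.cdf(z_crit - shift)) + std_normal.cdf(-z_crit - shift)


for n in (10, 20, 50, 100):
    print(f"n per group = {n:3d}: power = {power_two_sample_z(0.5, n):.2f}")
```

Under these assumptions, 10 samples per group gives a power of roughly 20%, and it takes around 100 per group to push power above 90%.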

References

Forstmeier, W., Wagenmakers, E.J. and Parker, T.H., 2017. Detecting and avoiding likely false‐positive findings – a practical guide. Biological Reviews, 92(4), pp. 1941-1968.

Ioannidis, J.P., 2005. Why most published research findings are false. PLoS Medicine, 2(8), p. e124.

Trivers, R., 2011. The folly of fools. New York, NY: Basic Books.